Evolving Dark Energy and AI

where I experience viscerally what everyone is talking about

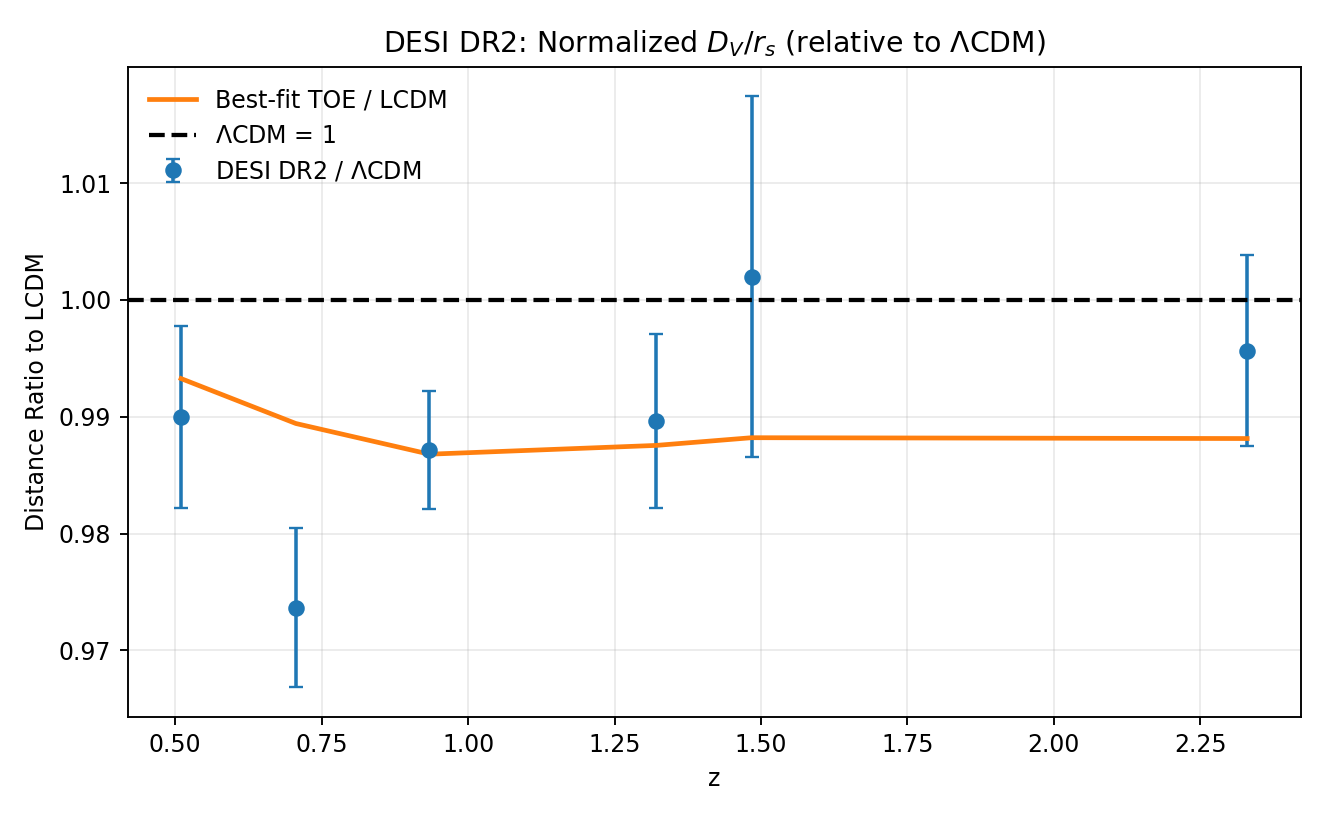

Over the past two years, evidence has been building that the dark energy is not a cosmological constant, the fiducial assumption of the last few decades. The blue dots in the plot above show one version of the problem: these are measurements by the Dark Energy Spectroscopic Instrument (DESI), and with one or two exceptions they are all lower, significantly lower, than the prediction of the fiducial model, called LCDM, shown as the dashed line. Forget about all the details; I’ll try to explain those in coming posts. You can see that there is a problem. In these posts, I have been trying both to present the evidence systematically in a way that interested folks can understand and to gain some insight into even deeper questions, such as how we change our beliefs. For now, I feel compelled to pause on the systematic part of this program because I’ve been blown away by agentic AI and want to share that visceral experience.

In the year 2000, cosmologists had discovered that there was dark energy, something driving the accelerated expansion of the universe. We were trying to figure out what it was. The cosmological constant was the favored idea, but there are theoretical issues that made everybody uncomfortable with that idea, so people were searching around for new ideas. There were literally thousands of papers proposing ideas, or models, for dark energy. Ewan Stewart, a postdoc at Fermilab at the time, came up to me and said something that turned into part of the abstract for a paper we wrote: “We propose that the dark energy has periodically dominated in the past so that its preponderance today is natural. We illustrate this paradigm with a model potential and show that its predictions are consistent with all observations.” The “we” there was Ewan and another postdoc Manoj Kaplinghat (who was proficient at coding and comparing to observations), and me. The idea was to solve a mystery: why is dark energy important only now? Why didn’t it show up earlier in the history of the universe? Since Copernicus, we have been very uncomfortable with the idea that we are living in a special place or time. Our answer was to build a model of dark energy such that now is not a special time: our form of dark energy appears from time to time in the history of the universe, and we just happen to be living at one of the times when it emerges.

The abstract says that our model is consistent with all observations, and we did a lot of work to show that this was true, but observations have gotten a lot better, so is the model still viable? Even more interesting, could the model explain the recent evidence that the dark energy may not be a cosmological constant? Could our model be the true model of dark energy?

That would be a good project for a first-year graduate student:

Read our initial paper: given their other commitments, a student could understand the paper in a month.

Read the simplest paper that articulates the evidence against the cosmological constant: That would take quite a while unless the student had a solid cosmology background. At least another month.

Do the work: code up our model, which has 3 free parameters. At least two months.

Get all the relevant data, which is from different sources, and learn how to use the data, the formats, etc. Two months.

Develop a method that compares the model against the data, and then run the comparison for thousands of different parameter sets. Three months.

Conservatively it would take a grad student a year with at least weekly meetings with me to be able to write a paper about this.
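To make the last two steps concrete, here is a minimal sketch of what "compare a model against distance data and scan parameter sets" means. It is not the code from our paper, and it is not what codex wrote. As a stand-in for our model it uses the common two-parameter form w(a) = w0 + wa(1 - a) for the dark energy equation of state, and the "data" are synthetic numbers generated from that same stand-in model, not DESI measurements. The matter fraction, the redshifts, the 1% errors, and every function name are assumptions chosen for illustration.

```python
import numpy as np

OMEGA_M = 0.3  # assumed matter fraction today (illustrative, not a fitted value)


def expansion_rate(z, w0, wa):
    """E(z) = H(z)/H0 for a flat universe with w(a) = w0 + wa * (1 - a)."""
    a = 1.0 / (1.0 + z)
    dark_energy = (
        (1.0 - OMEGA_M)
        * a ** (-3.0 * (1.0 + w0 + wa))
        * np.exp(-3.0 * wa * (1.0 - a))
    )
    return np.sqrt(OMEGA_M * (1.0 + z) ** 3 + dark_energy)


def comoving_distance(z, w0, wa, steps=2000):
    """Dimensionless comoving distance: integral of dz'/E(z') from 0 to z."""
    grid = np.linspace(0.0, z, steps)
    integrand = 1.0 / expansion_rate(grid, w0, wa)
    dz = grid[1] - grid[0]
    # Trapezoid rule written out by hand
    return dz * (integrand.sum() - 0.5 * (integrand[0] + integrand[-1]))


def chi_squared(params, z_data, d_data, sigma):
    """Sum of squared, error-weighted differences between data and model."""
    w0, wa = params
    model = np.array([comoving_distance(z, w0, wa) for z in z_data])
    return float(np.sum(((d_data - model) / sigma) ** 2))


def scan(z_data, d_data, sigma, w0_values, wa_values):
    """Brute-force grid scan; returns (best chi2, best w0, best wa)."""
    best = None
    for w0 in w0_values:
        for wa in wa_values:
            chi2 = chi_squared((w0, wa), z_data, d_data, sigma)
            if best is None or chi2 < best[0]:
                best = (chi2, w0, wa)
    return best


# Synthetic stand-in data: generated from the model itself, NOT real measurements
z_data = np.array([0.3, 0.5, 0.7, 0.9, 1.3, 2.3])
truth = (-0.8, -0.6)
d_data = np.array([comoving_distance(z, *truth) for z in z_data])
sigma = 0.01 * d_data  # assumed 1% errors

w0_values = np.linspace(-1.2, -0.4, 9)
wa_values = np.linspace(-1.2, 0.4, 9)
best_chi2, best_w0, best_wa = scan(z_data, d_data, sigma, w0_values, wa_values)

# A cosmological constant corresponds to w0 = -1, wa = 0
chi2_lcdm = chi_squared((-1.0, 0.0), z_data, d_data, sigma)
print(f"best fit: w0={best_w0:.2f}, wa={best_wa:.2f}, chi2={best_chi2:.3g}")
print(f"cosmological constant: chi2={chi2_lcdm:.3g}")
```

A real analysis would replace the stand-in equation of state with the actual model, use the published measurements with their covariances, and replace the grid with a proper sampler. The structure stays the same, though: model, distances, a goodness-of-fit number, and a loop over parameters.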

You’ve probably guessed the punchline by now. A few days ago, I typed into codex: “i wrote a paper 25 years ago with a model for evolving dark energy, https://arxiv.org/abs/astro-ph/0002360. Can you write code that chooses parameters in that model so that it gets distances consistent with the latest DESI results [using CMB LCDM parameters]?” In about 5 minutes, it produced the plot above. The orange curve is the prediction of our model, after codex read the paper, coded up the model, downloaded the DESI data, and scanned hundreds of sets of parameters to find a best fit. FWIW, our model does indeed fit the data better than LCDM (with two more parameters).

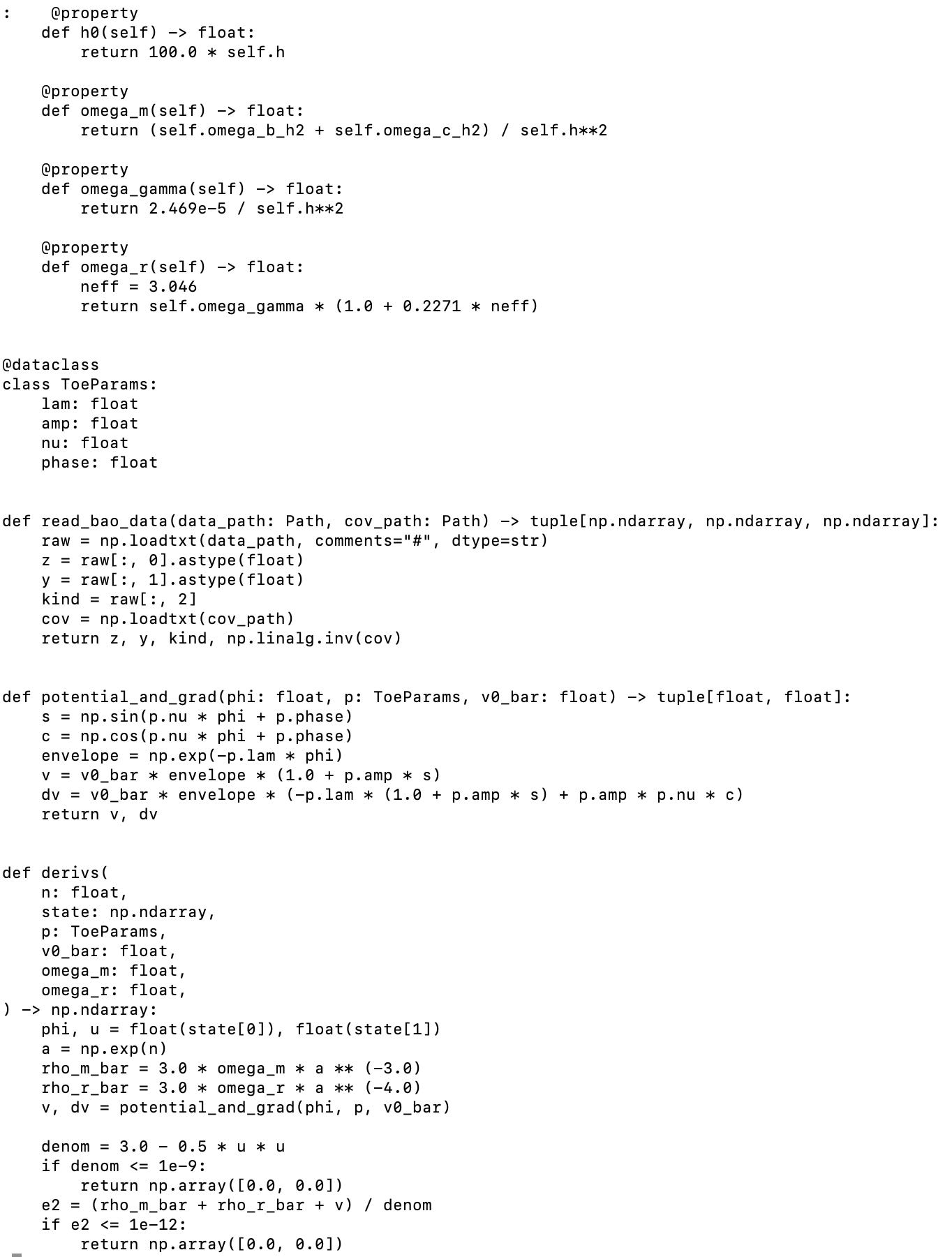

Here’s a snippet of the hundreds of lines of code it wrote in a few seconds.

Full disclosure, I did ask it to tweak a few things, so the total time I spent on this was not 15 seconds, but more like a full minute. In that time, codex set up a nice directory structure, with code, plots, and data. And, I couldn’t resist the temptation, yes it also wrote a paper. The paper isn’t great right now, and I was too shaken to ask it to improve it. We all know that these models are very good at writing, so that could be done very easily.

And yet, I asked the computer a question, a question that no one other than a trained cosmologist would even understand how to approach. And it gave a solid result in a few minutes. I referred to the recent DESI results and used jargon (“using CMB LCDM parameters”) as if I were talking to a seasoned cosmologist, without explaining what I meant. And it knew. What it did in a few minutes is what I have spent most of my career doing. It took us about a year to write our initial paper. So all of that time now will be used for … something else.

It was a powerful, visceral experience, one that will take a long time to process.

I don’t want to overstate what it accomplished. To turn this into a quality paper requires more than just polishing text. First, everything has to be tested. Even if all of its calculations are correct, there is the question of whether the model did what we intended: how did dark energy behave in the distant past? Were there other epochs when it was relevant? If so, did it impact anything we can observe? E.g., does it agree with the precise measurements of the cosmic microwave background (which I was planning on describing this week)? Are the parameters of the model realistic? What are the implications for fundamental physics?
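The question of whether dark energy was relevant at earlier epochs comes down to tracking its share of the total energy density as a function of redshift. Here is a minimal sketch of that check. Again, it uses the stand-in form w(a) = w0 + wa(1 - a) and not our model, and the density fractions and function name are assumptions chosen for illustration. For a cosmological constant the share collapses to essentially zero by the time the cosmic microwave background was emitted; a model in which dark energy periodically dominates would instead show the share rising again at earlier times.

```python
import numpy as np

OMEGA_M = 0.3    # assumed matter fraction today (illustrative)
OMEGA_R = 9e-5   # assumed radiation fraction today (illustrative)


def dark_energy_fraction(z, w0=-1.0, wa=0.0):
    """Share of the total energy density in dark energy at redshift z.

    Flat universe with w(a) = w0 + wa * (1 - a); the defaults correspond
    to a cosmological constant.
    """
    a = 1.0 / (1.0 + z)
    dark_energy = (
        (1.0 - OMEGA_M - OMEGA_R)
        * a ** (-3.0 * (1.0 + w0 + wa))
        * np.exp(-3.0 * wa * (1.0 - a))
    )
    matter = OMEGA_M * (1.0 + z) ** 3
    radiation = OMEGA_R * (1.0 + z) ** 4
    return dark_energy / (dark_energy + matter + radiation)


# Today, at z = 1, and roughly when the cosmic microwave background was emitted
for z in (0.0, 1.0, 1100.0):
    print(f"z = {z:7.1f}: dark energy fraction = {dark_energy_fraction(z):.3g}")
```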

I want to sit with the experience but can’t resist closing on an optimistic note. It is possible that this technology will both help all scientists and make people like me more relevant. While a graduate student starting from scratch could have done this in a year, I have a lot of experience, so I probably could have done this with a solid week of work. The problem is that I am never able to carve out a full week of work. My days are broken up into 30-minute chunks, as are those of many people at my career stage. This tool will enable us to get stuff done without sweating details like file formats and locations. Also, go back to that list of things a graduate student would have to master. Well, they still need to learn a lot of that stuff: they need to learn how to read a paper, understand data, and compare models against data. This tool will give them more time to focus on the hardest, most important parts of that challenge. And they will need senior people to guide them, to help them reach the point where they understand when a paper is ready and when it is not. Bottom line: yes, I am in awe; no, I do not have any certainty about what the future will bring; but maybe, there is hope that this will improve our work-lives.

I agree with your sentiment that these intelligent tools, when prompted by experts with deep knowledge, can unleash a new creative process! And this stimulates questions from other experts! So here’s mine: Does your scalar field require a tuning potential V(phi) to match the data? If so, would a massive scalar field which acts similarly to an inflaton, provided by some f(R) gravity theory with additional coupling constants, solve the problem in a natural way?

Couldn’t agree more. In my CMB work, modern AI tools are easily a 10x multiplier. It baffles me when I hear fellow cosmologists say they don’t use them. I've found Claude Code is currently superior to Codex for this cosmological likelihood stuff.